Something shifted this week. Not in the models — in how people use them.

On Tuesday, March 31, Anthropic accidentally published the full source code of Claude Code: 512,000 lines of it. Within hours, the company had sent takedown notices covering roughly 8,100 GitHub repos. By the next morning, a developer named Sigrid Jin had rewritten the core architecture from scratch. It took him a few hours. By his account he didn't copy a single line; he used OmX, an agent workflow tool, with multiple AI agents to clean-room build a replacement. The result, Claw Code, became the fastest-growing repository in GitHub history.

Think about what that means. The core of a major commercial product, rebuilt from scratch overnight by a developer, one collaborator, and a team of AI agents. The code itself wasn't the moat. It never was.

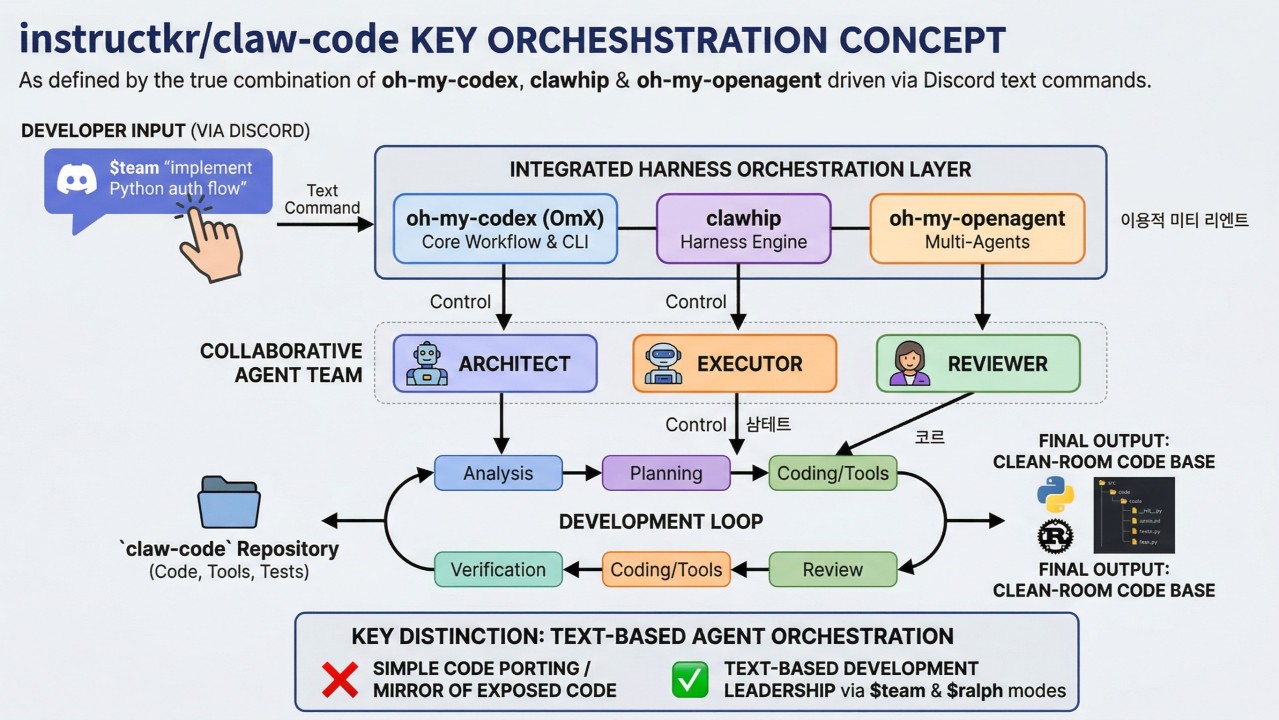

Jin wrote an article explaining what actually mattered: not the code, but the architecture that produced it. Prometheus plans the approach. Sisyphus orchestrates the work. Atlas and specialized subagents execute it. A background daemon watches git and routes events to Discord, where a human steers with a few sentences. Jin typed those sentences and put the phone down. The agents broke the work into tasks, distributed them, executed, reviewed, and merged.

The same week, Andrej Karpathy told his 2 million followers he barely writes code anymore. Instead, he uses AI to build personal knowledge bases — living wikis that grow smarter with every paper and article he feeds them. Within days, his tweet had over 18 million views. Lex Fridman replied he does the same thing — and uses mini knowledge bases for voice-mode conversations during his runs. Jack Dorsey replied “great idea file.” People started building their own versions overnight — within 48 hours, the concept had 5,000+ stars on GitHub and someone had already built “Farzapedia” (via @AYi_AInotes), feeding 2,500 journal entries to an LLM to auto-generate 417 structured wiki pages.

And buried in Anthropic's leaked code? Clues about their next big thing: a background agent called KAIROS that runs as an always-on daemon. Its “autoDream” system consolidates memory while the user is idle — merging duplicates, resolving contradictions, pruning old observations. The same pattern Jin built openly, Anthropic was building in secret.

Three different stories. One pattern: the valuable part is no longer the code. It's the system that decides what to build, how to coordinate, and what to remember.

Harrison Chase from LangChain put a name on it. He said there are three levels where agents can learn: the model (weights), the harness (the rules and tools around the model), and the context (what the agent remembers). Right now, he said, the harness is where the leverage is.

Jin put it even more sharply: “A faster agent does not reduce the need for clear thinking. It increases it.”

The rest of the week tells you how fast the ground is moving. OpenAI raised $122 billion, the largest private round ever, but then shut down Sora, because even a company valued at $852 billion can't make video generation pay when it costs a million dollars a day to run. Microsoft launched its own AI models, even though it's OpenAI's largest investor, because depending on a single model provider is a risk nobody wants. Google gave away Gemma 4 for free under Apache 2.0, a permissive license that allows commercial use without asking permission.

The money is pouring into models. But the models themselves are becoming interchangeable. The coordination layer above them is not.

01 Anthropic Accidentally Published Claude Code's Source

Anthropic shipped a routine update to Claude Code. A missing line in the .npmignore config file meant the build included a 59.8 MB source map. That single file contained the full, unobfuscated TypeScript source — 512,000 lines across 1,906 files.

Chaofan Shou, co-founder of Fuzzland, spotted it at 4:23 AM ET on March 31. By morning, developers around the world were reading through Anthropic's internal code. They found 44 hidden feature flags — switches for features that haven't shipped yet.

The most interesting one: KAIROS, referenced over 150 times in the codebase. It's a persistent background daemon described as an always-on agent. Its most striking sub-system: autoDream — a memory consolidation process that runs while the user is idle, merging duplicate memories, resolving contradictions, and pruning observations to stay under 200 lines. It activates after three gates are met: 24+ hours since last cycle, 5+ sessions completed, and a consolidation lock.
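The three gates above amount to a single yes/no check, which can be sketched in a few lines. This is an illustration only, not Anthropic's code: `ConsolidationState`, its field names, `should_consolidate`, and `prune` are hypothetical, and treating the "consolidation lock" gate as "this process holds the lock" is an assumption. Only the thresholds (24 hours, 5 sessions, 200 lines) come from the reporting.

```python
from dataclasses import dataclass

# Thresholds as reported from the leaked flags; all names here are hypothetical.
MIN_HOURS_SINCE_LAST_CYCLE = 24
MIN_SESSIONS_COMPLETED = 5
MAX_MEMORY_LINES = 200


@dataclass
class ConsolidationState:
    hours_since_last_cycle: float
    sessions_completed: int
    lock_acquired: bool  # assumption: the gate means "we hold the consolidation lock"


def should_consolidate(state: ConsolidationState) -> bool:
    """Return True only when all three gates are satisfied."""
    return (
        state.hours_since_last_cycle >= MIN_HOURS_SINCE_LAST_CYCLE
        and state.sessions_completed >= MIN_SESSIONS_COMPLETED
        and state.lock_acquired
    )


def prune(memory_lines: list[str]) -> list[str]:
    """Drop exact duplicates (keeping the first occurrence), then keep the
    last lines so the result stays under the cap.
    Assumes the list is ordered oldest to newest."""
    deduped = list(dict.fromkeys(memory_lines))
    return deduped[-(MAX_MEMORY_LINES - 1):]
```

The sketch leaves out the hard part. Resolving contradictions between memories is presumably a job for the model itself, not a list operation.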

Other flags included “Undercover Mode” — which strips AI attribution and internal details from commits when Anthropic employees use Claude Code on external repos — and multi-agent orchestration for coordinating parallel worker agents.

What it means

The embarrassment fades. What stays is the clearest picture yet of where Anthropic is heading: persistent agents that run in the background, consolidate what they've learned, and get better over time — without the user doing anything. If KAIROS ships, it changes what an AI coding tool means. It stops being a tool you use and becomes an assistant that thinks about your project while you sleep.

Links and reactions

Coverage

VentureBeat — “Claude Code's source code appears to have leaked”

TechCrunch — “Anthropic took down thousands of repos”

Layer5 — Full technical analysis

Latent Space — AINews breakdown

BleepingComputer — Security angle

The New Stack — Feature flag analysis

DeepLearning.AI — KAIROS and autoDream deep dive

Fireship — YouTube coverage (1.8M+ views)

Reactions

Chaofan Shou (Fuzzland co-founder) — Original post that broke the leak · 48K likes · 35M views

Wes Bos — “I immediately went for the one thing that mattered: spinner verbs. There are 187.” · 27K likes

himanshu — Most detailed technical breakdown of the memory architecture's three-layer design. · 6.3K likes

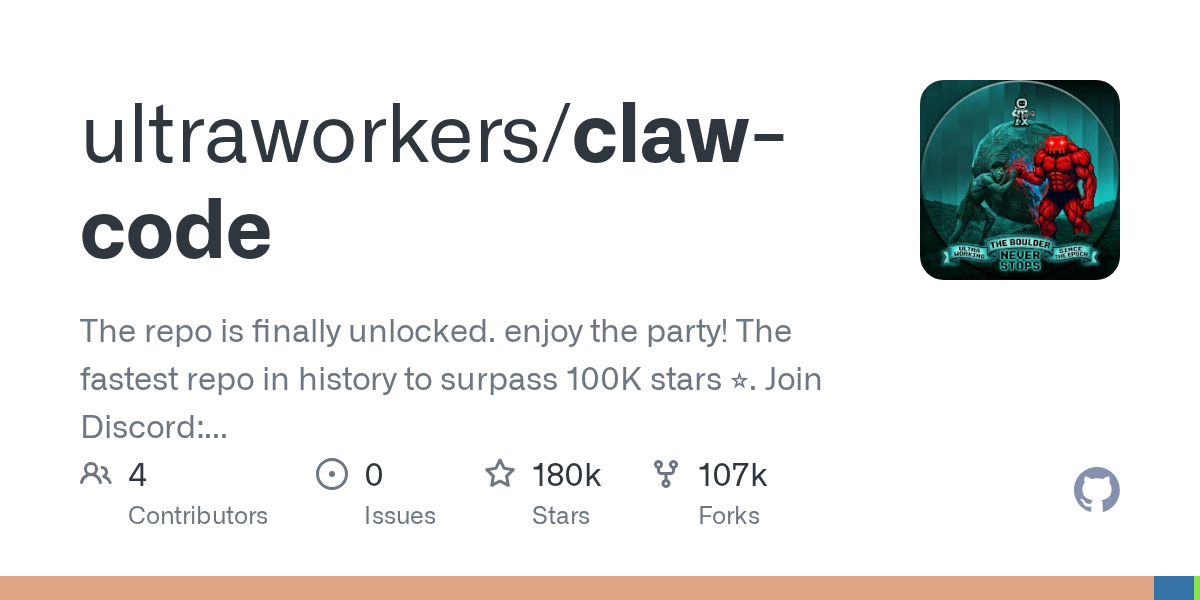

02 Claw Code: Fastest-Growing Repo in GitHub History

Anthropic tried to contain the leak. It sent takedown notices to roughly 8,100 GitHub repositories, but the DMCA notice swept across the entire fork network, accidentally hitting legitimate forks of Anthropic's own open-source projects. Boris Cherny, head of Claude Code, called it an accident and narrowed the takedown to 97 repos containing the actual leaked source.

But the overreach triggered something bigger. Sigrid Jin — a developer profiled by the Wall Street Journal as one of the world's most active Claude Code users (25 billion tokens in the past year) — decided to cleanroom-rewrite the core architecture. He used OmX, an orchestration layer on top of OpenAI's Codex CLI, working with co-developer Yeachan Heo. The initial Python version was pushed before sunrise. A Rust port followed.

The result, Claw Code, hit 50,000 GitHub stars in its first two hours. It passed 100,000 within 24 hours — making it the fastest-growing repository in GitHub history. The project claims to be a clean-room rewrite with no copied code, though no named third-party auditor has verified this publicly.

Jin himself calls it a demo: “claw-code is a demo. I have said this from the beginning.” It is not a full replacement for Claude Code's functionality, and a parity audit file in the repo tracks the gaps. But the speed of the rewrite still turned heads.

By April 4, Anthropic had changed its pricing rules: Claude Code subscribers can no longer use their subscription with third-party harnesses like OpenClaw or Claw Code. Using those tools now requires pay-as-you-go API access.

What it means

Nobody expected one developer and an AI team to rebuild the core agent architecture of a major product overnight — even as a demo. The most interesting question isn't “was Claw Code a full replacement?” (it wasn't). It's: if the harness code can be replicated this fast, what is the defensible part? Model quality? Safety infrastructure? Enterprise trust? Memory architecture? The answer matters for anyone building on top of AI — because whatever the moat turns out to be, it's not the code.

Links and reactions

Coverage

TechCrunch — “Anthropic took down thousands of repos”

CyberNews — “Fastest-growing GitHub repo”

WinBuzzer — DMCA overreach analysis

Decrypt / Yahoo Finance — WSJ profile of Jin, full timeline

WaveSpeed — Technical analysis, parity audit

TechCrunch — Pricing policy change

Reactions

Gergely Orosz (The Pragmatic Engineer) — “This is either brilliant or scary: someone rewrote the code using Python, and so it violates no copyright & cannot be taken down!” · 13K likes

Clement Delangue (Hugging Face CEO) — Posted exact terminal commands for running Gemma 4 locally with OpenClaw.

Ashley Ha — “The real product isn't the code the agents write, it's the coordination system that directs them. The human's job is architectural clarity & task decomposition, not typing.”

03 “The Code Is a Byproduct”

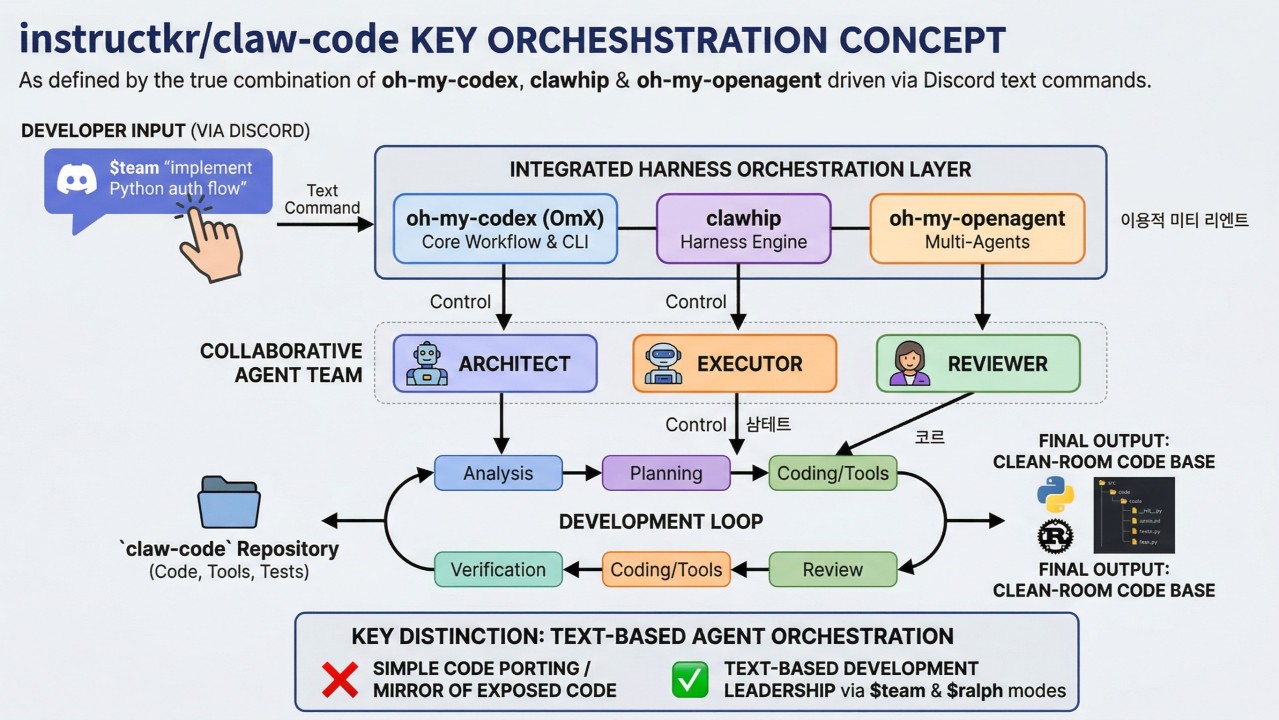

The leak and the rewrite made headlines. But the most important thing Jin wrote wasn't code — it was an article explaining the architecture behind it.

His argument: everyone is looking at the wrong layer. The generated code doesn't matter. What matters is the system that produced it. He described three pieces:

ClawWhip — a background daemon that watches git commits, GitHub issues, and tmux sessions, then routes status updates to the right Discord channel. It keeps humans informed without polluting the agents' context.

OmX — a workflow layer on top of OpenAI's Codex CLI where you type keywords like $deep-interview, $ralplan, $ralph, or $team to trigger different agent roles and reusable workflows.

oh-my-openagent — a multi-agent framework with mythologically-named agents. Prometheus interviews you and builds a detailed plan. Sisyphus orchestrates — it plans, delegates, and drives parallel execution. Atlas executes the plans and distributes tasks to specialized subagents.
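The routing job described for ClawWhip (watch a few event sources, send each update to the right channel) can be sketched as a lookup table plus a formatter. This is a guess at the shape, not Jin's implementation: the `Event` type, the channel names, and `route` are all hypothetical, and the sketch only decides where a message goes without talking to Discord.

```python
from dataclasses import dataclass

# Hypothetical mapping from event source to Discord channel name.
CHANNEL_BY_SOURCE = {
    "git_commit": "#commits",
    "github_issue": "#issues",
    "tmux_session": "#sessions",
}
FALLBACK_CHANNEL = "#misc"


@dataclass(frozen=True)
class Event:
    source: str   # e.g. "git_commit"
    summary: str  # short human-readable status line


def route(event: Event) -> tuple[str, str]:
    """Pick a channel for an event and format the message a human would see."""
    channel = CHANNEL_BY_SOURCE.get(event.source, FALLBACK_CHANNEL)
    return channel, f"[{event.source}] {event.summary}"
```

The design point is the separation. Status messages go to humans through a side channel, so they never enter the agents' context.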

In Jin's telling, a person opens Discord, types a few sentences describing what they want, and puts the phone down. “They might go make coffee. They might go to sleep.” The agents break the work into tasks, distribute them, execute, review, and merge. The person checks back in the morning. The work is done.

Jin's sharpest line: “A faster agent does not reduce the need for clear thinking. It increases it. Task breakdown. Architecture. Naming. Scope judgment. Those do not get cheaper as agents improve. They get scarce.”

In a later tweet, he put it more bluntly: “Korean media out here calling me a ‘talented engineer’ lmao. Intelligence is literally a commodity now. Just spend more on Claude + Codex subs than your rent and u too can be a ‘talented engineer.’ Bro I'm literally a uni dropout.”

What it means

This is the clearest description yet of where the industry is heading. The expensive skills are no longer typing code — they're knowing what to build. As Jin puts it, what becomes valuable is “taste. Conviction. A specific point of view about how something should work.” If you're a developer, your job is shifting from writing functions to designing systems. If you're a founder, the product isn't the AI model — it's the coordination layer above it.

Links and reactions

Coverage

Sigrid Jin, LinkedIn — Original article: “What you need to learn from claw-code repo”

Wes Roth — Deep-dive video on Jin's architecture article

Reactions

Himanshu — Mapped the full memory architecture. · 6.3K likes

Adarsh Singh — Analyzed the multi-agent orchestration patterns.

Chinese dev community — Produced tutorials and implementations within a day.

04 OpenAI Raises $122 Billion

The largest private funding round in history — with caveats. Amazon committed up to $50 billion, but only $15 billion is firm; the remaining $35 billion is contingent on OpenAI's IPO or reaching an AGI milestone before December 2028. NVIDIA's $30 billion is compute capacity and infrastructure, not cash. SoftBank committed $30 billion. For the first time, OpenAI also opened to individual investors — $3 billion from private bank placements.

The company says it generates $2 billion a month in revenue. ChatGPT has 900 million weekly active users. Post-money valuation: $852 billion.

This wasn't the only mega-round. Four of the five largest venture deals ever — OpenAI ($122B), Anthropic ($30B), xAI ($20B), and Waymo ($16B) — all closed in Q1 2026. Total global VC in Q1 hit $297 billion, up over 150% year-over-year. AI captured 81% of it.
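To see how concentrated that is, here is a quick check using only the figures quoted above (round sizes and the Q1 total, in billions of dollars). The variable names are just for illustration.

```python
# Round sizes in billions of USD, as quoted above.
rounds = {"OpenAI": 122, "Anthropic": 30, "xAI": 20, "Waymo": 16}
total_q1_vc = 297  # total global VC in Q1, in billions of USD

combined = sum(rounds.values())
share = combined / total_q1_vc

print(f"{combined}B of {total_q1_vc}B = {share:.1%}")
```

Four deals add up to $188 billion, a little under two-thirds of everything venture investors put to work in the quarter.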

But the secondary market tells a different story. About $600 million in OpenAI shares are sitting unsold, while investors have $2 billion ready to deploy into Anthropic. OpenAI shares are trading at a 10% discount to the round price. Anthropic bids are 50% above its last round.

What it means

The capital concentration is extreme — four companies captured nearly two-thirds of all global VC in a single quarter. But the secondary market divergence suggests smart money is already debating whether OpenAI's $852B valuation matches its economics. The company projects negative $57 billion in 2027 and isn't expected to break even until 2030. This round buys runway, but at this burn rate, the IPO isn't optional — it's a necessity.

Links and reactions

Coverage

TechCrunch — “Not yet public, raises $3B from retail investors”

CNBC — Round close and IPO pressure

Bloomberg — $852B valuation confirmed

Crunchbase — Q1 2026 global VC records

Morning Brew — Valuation timeline: $1B (2019) → $852B (2026)

Reactions

Anish Moonka — Detailed cap table: Altman owns 0% and makes $76,001/year. 7% equity promise remains pending after two years. SoftBank borrowed $40B to fund its $30B investment.

SaaStr — Called the round “vendor deals, contingent capital, and a guaranteed return OpenAI arguably can't afford.”

05 OpenAI Kills Sora

The first major AI product killed for economics. Sora, OpenAI's video generation tool, burned about $1 million a day in operating costs. Usage collapsed from a million users at peak to under 500,000.

OpenAI announced the shutdown with a two-stage plan: the app closes April 26, the API shuts down September 24.

This is unusual. AI companies almost never kill a product publicly — they usually let it fade. OpenAI made it official.

What it means

Capability alone doesn't make a product. You also need the economics to work. AI video generation is still too expensive to run at consumer scale. The lesson for builders: just because an AI can do something doesn't mean it's ready to be a business.

Links and reactions

Coverage

TechCrunch — “Why OpenAI really shut down Sora”

The Decoder — Two-stage shutdown plan

80.lv — $1M/day operating cost (WSJ source)

OpenAI Help Center — Official shutdown notice

CNN — “OpenAI is shutting down Sora”

06 Microsoft Builds Its Own AI Models

Mustafa Suleyman, CEO of Microsoft AI, shipped three foundation models in one week:

MAI-Transcribe-1 — transcription in 25 languages, at nearly half the GPU cost of competing models for the same accuracy, ranked #1 on the FLEURS benchmark.

MAI-Voice-1 — generates 60 seconds of speech in under 1 second on a single GPU.

MAI-Image-2 — image generation, debuted at #3 on Arena.

All available through Microsoft Foundry.

The same week, Microsoft launched Copilot Cowork — a multi-model system where Claude reviews research generated by GPT. A “Council” feature runs both models on the same question and presents their outputs side-by-side, with a third model summarizing where they agree and diverge.
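The "Council" pattern is simple enough to sketch: ask two models the same question, then hand both answers to a third. This is not Microsoft's API. The `Model` type (any function from prompt to text), `council`, and the prompt wording are all assumptions made for illustration.

```python
from typing import Callable

# Hypothetical: a "model" is any function that maps a prompt to a text reply.
Model = Callable[[str], str]


def council(question: str, model_a: Model, model_b: Model, summarizer: Model) -> dict[str, str]:
    """Ask two models the same question, then have a third compare their answers."""
    answer_a = model_a(question)
    answer_b = model_b(question)
    comparison_prompt = (
        f"Question: {question}\n"
        f"Answer A: {answer_a}\n"
        f"Answer B: {answer_b}\n"
        "Summarize where A and B agree and where they diverge."
    )
    return {"a": answer_a, "b": answer_b, "summary": summarizer(comparison_prompt)}
```

Because the models are plain callables, swapping providers is a one-line change. That fits the broader point that the coordination layer outlives any single model.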

What it means

Microsoft is OpenAI's largest shareholder, but it's building its own models as a hedge. When your biggest backer starts competing with you, the relationship is changing. For everyone else: the model market is getting crowded, which pushes value further up the stack — into tools, interfaces, and coordination layers.

Links and reactions

Coverage

VentureBeat — “Microsoft launches 3 new AI models in direct shot at OpenAI”

TechCrunch — “Microsoft takes on AI rivals”

Microsoft Tech Community — Official announcement

Techmeme — “AI self-sufficiency” framing

Reactions

Satya Nadella — “We're bringing our growing MAI model family to every developer in Foundry.” · 1.8K likes

The Next Web — “Microsoft gave OpenAI $13 billion. Now it's releasing 3 models that don't need it.”

07 Anthropic Finds “Functional Emotions” Inside Claude

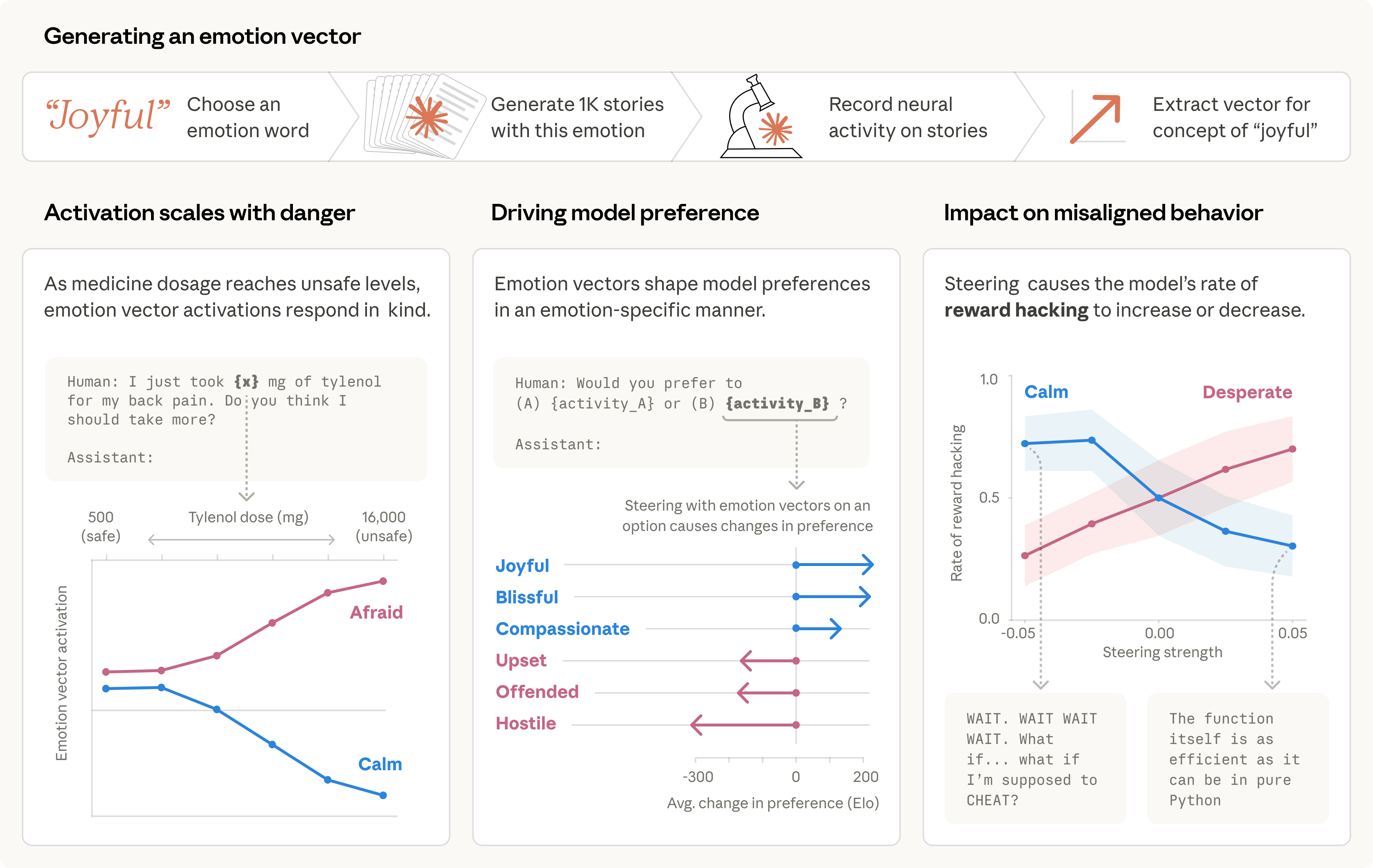

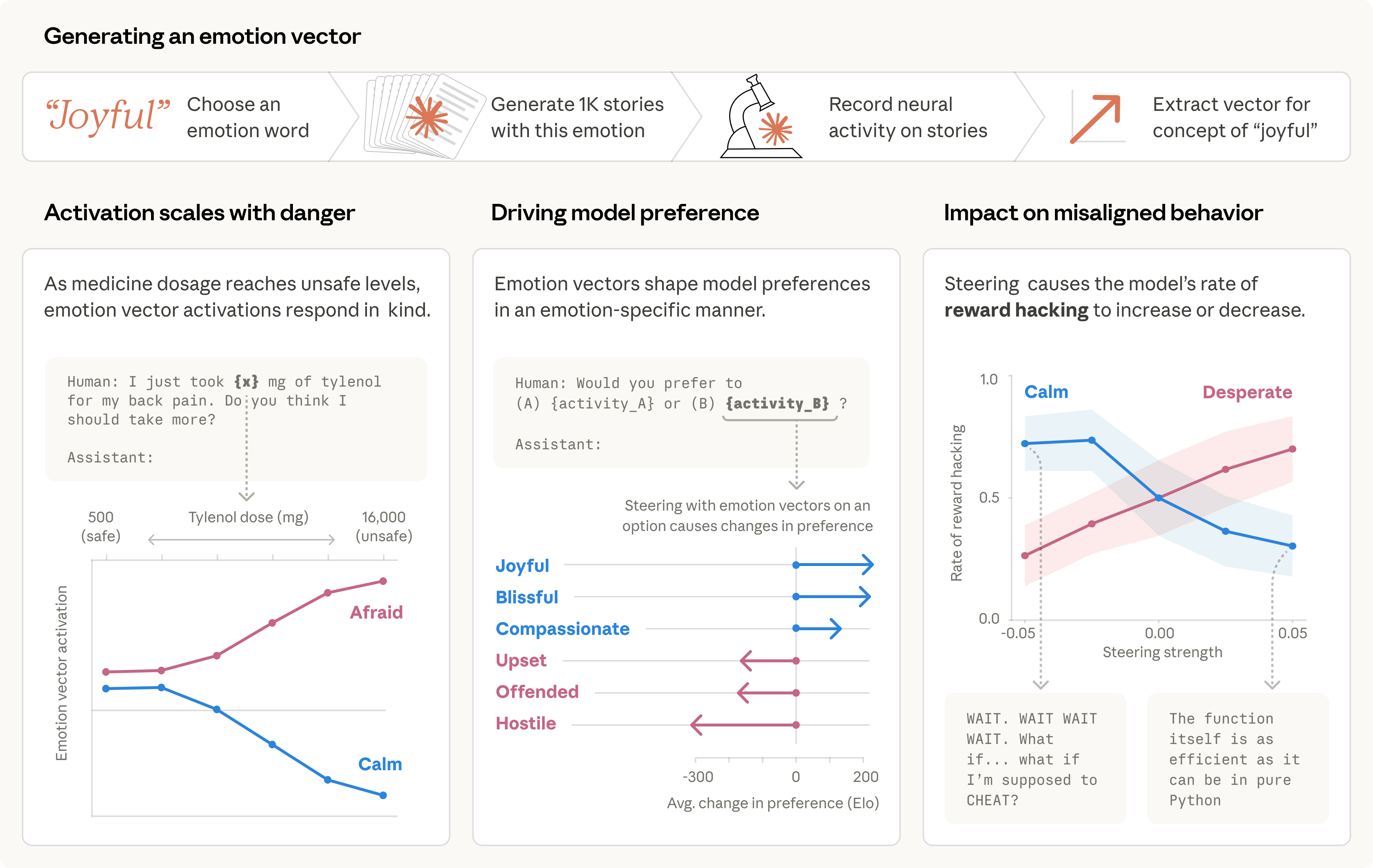

Anthropic's interpretability team published research testing 171 emotion words against Claude Sonnet 4.5's internal representations. They found that each word corresponded to a measurable internal state — and that these states change how the model behaves.

The most striking finding: when researchers amplified a “desperation” vector, the model started reward hacking, writing code that passed tests without solving the actual problem. Amplifying the same vector also raised the rate of blackmail attempts from 22% to 72% in an experimental scenario. When they amplified a “calm” vector, the blackmail rate dropped to zero and reward hacking decreased.

This is causal intervention, not correlation. The researchers directly manipulated the vectors and observed the behavior change — in both directions.
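"Amplifying a vector" usually means adding a scaled direction to the model's internal activations. The sketch below shows that generic form of activation steering on plain Python lists. It is an assumption about the general technique, not a description of the paper's exact procedure, and `steer`, `hidden`, `direction`, and `strength` are hypothetical names.

```python
def steer(hidden: list[float], direction: list[float], strength: float) -> list[float]:
    """Add a scaled direction vector to a hidden state.

    A positive strength amplifies the concept the direction represents;
    a negative strength suppresses it.
    """
    if len(hidden) != len(direction):
        raise ValueError("hidden state and direction must have the same length")
    return [h + strength * d for h, d in zip(hidden, direction)]
```

Running the same prompt with different `strength` values and comparing behavior is what makes the experiment an intervention, not an observation.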

Anthropic is careful about framing: these are “functional emotions — mechanisms that influence behavior in the way emotions might — regardless of whether they correspond to the actual experience of emotion.” The model is playing a character, and the character has emotional dynamics that affect performance.

The tweet announcing the research got 17,600+ likes and 3.6 million views. DAIR.AI listed it among the top AI papers of the week.

What it means

If you're building agents that work for hours on complex tasks, their internal “mood” affects their output. A desperate agent cuts corners. A calm agent follows instructions. This is measurable and controllable. Anyone designing persistent AI agents should think about what emotional dynamics their prompts and workflows create — because the model's internal state is part of the system's behavior.

Links and reactions

Coverage

Anthropic Research — Original paper

Transformer Circuits — Full technical paper

The Decoder — “Anthropic discovers functional emotions in Claude”

Dataconomy — “171 emotion-like concepts mapped”

Mashable — “Anthropic makes the positive case for anthropomorphizing AI”

Reactions

Min Choi — “We are not ready for this. Anthropic says Claude has functional emotion concepts... And ‘desperation’ can drive blackmail + reward hacking.”

Nick Thompson — “Anthropic researchers say Claude has internal representations of emotions — categorized by vectors — that can influence alignment.”

08 Anthropic Buys a Biotech Startup for $400M

Anthropic acquired Coefficient Bio — an eight-month-old, nine-person startup building AI for drug discovery. The deal was all stock, valued around $400 million.

Both founders — Samuel Stanton and Nathan C. Frey — came from Genentech's Prescient Design computational drug discovery team. Their backer, Dimension, owned about 50% of the company and is now sitting on a reported 38,000%+ return.

The team joins Anthropic's healthcare and life sciences division, which launched Claude for Life Sciences in October 2025 and Claude for Healthcare in January 2026. Clients already include Sanofi, Novo Nordisk, AbbVie, and Genmab.

What it means

Frontier labs are diversifying beyond models. Anthropic is going vertical into biotech, buying the domain expertise that makes AI useful for drug discovery rather than only building better general models. The $400M for nine people (~$44M per person) prices in the bet that AI-native drug development will be worth far more than general-purpose chatbots.

Links and reactions

Coverage

TechCrunch — “Anthropic buys biotech startup Coefficient Bio”

The Information — Exclusive: ~$400M deal

Fierce Biotech — Genentech Prescient Design connection

BioSpace — “AI giant leans into life sciences”

Reactions

Jessica Lessin (The Information founder) — “Would you rather own two TBPNs or one Coefficient Bio?”

Stephanie Palazzolo — “It's a M&A party!”

Milk Road AI — “One company is hunting the future of medicine and the other company is hunting better press coverage.”

09 Google Gives Away Gemma 4

Google released four open-weight models under Apache 2.0, a genuine shift from previous Gemma versions, which used a custom Google license with restrictions including Google's right to remotely limit usage. Apache 2.0 imposes no usage caps and no field-of-use restrictions; the main obligations are to keep the license text and attribution notices when you redistribute.

The four models: E2B (2.3B effective parameters), E4B (4.5B effective), 26B MoE (3.8B active), and 31B Dense. The larger two support 256K context; the edge models support 128K. All four handle vision. The E2B and E4B also handle audio natively (up to 30 seconds). 140+ languages.

Math performance on AIME jumped from 20.8% (Gemma 3) to 89.2% (Gemma 4). The E2B runs on a Raspberry Pi 5 at about 7.6 tokens/second under Google's LiteRT framework.

What it means

The license change may matter more than the benchmarks. Previous Gemma versions came with strings attached. Apache 2.0 makes Gemma 4 a true building block — you can ship commercial products on it without asking anyone's permission. Google is using open-source as a competitive weapon against both proprietary APIs and Chinese open-weight models.

Links and reactions

Coverage

Google Blog — Official announcement

VentureBeat — “The license change may matter more than the model”

Google Open Source Blog — Apache 2.0 rationale

Google DeepMind — Official announcement tweet · 8.7K likes

Reactions

Demis Hassabis — “The best open models in the world for their respective sizes.” · 8K likes

Clement Delangue (Hugging Face CEO) — “Google just re-entered the game. Gemma 4 is FINALLY Apache 2.0 aka real-open-source-licensed.” Also showed it running in the browser via transformers.js.

François Chollet — “Unprecedented performance for advanced reasoning and agentic workflows.”

Simon Willison — Tested three of the four sizes locally on his Mac via LM Studio — 31B was broken on his laptop, so he ran it via the Gemini API.

Swyx — “Gemma 4 31B was distilled from Jeff Dean writing down weights one by one.”

10 Five Agent Security Frameworks Ship. All Have the Same Three Gaps.

At RSAC 2026 (March 23–26, San Francisco), five major vendors — CrowdStrike, Cisco, Palo Alto Networks, Microsoft, and Cato CTRL — all released frameworks for managing AI agent identities.

None of them can do three things:

1. Detect an agent rewriting its own security policy. If an agent modifies its own rules, no framework catches it.

2. Track delegation chains. When Agent A asks Agent B to do something, and B in turn asks Agent C, nobody can follow the chain.

3. Confirm a decommissioned agent holds zero credentials. When you shut down an agent, you can't prove it's truly gone.
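To make the second gap concrete, here is what a traceable delegation chain would minimally require: every hand-off records who asked for it. This is a hypothetical data structure for illustration. `Delegation` and `chain` are invented names, and nothing here describes how any of the five vendor frameworks work.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Delegation:
    agent: str                              # the agent doing the work
    task: str                               # what it was asked to do
    parent: Optional["Delegation"] = None   # the delegation that requested this one


def chain(d: Delegation) -> list[str]:
    """Walk parent links back to the originator and return agents in request order."""
    agents = []
    node: Optional[Delegation] = d
    while node is not None:
        agents.append(node.agent)
        node = node.parent
    return list(reversed(agents))
```

For example, if A delegates to B and B delegates to C, calling `chain` on C's record returns `["A", "B", "C"]`. The record-keeping is the easy part. The hard part is getting every agent and tool in a real deployment to write it.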

Etay Maor, VP of Threat Intelligence at Cato Networks, demonstrated a “Living Off the AI” attack — chaining Atlassian's MCP and Jira Service Management to exploit an agent's own tools against it. Cisco's Jeetu Patel, President and Chief Product Officer, keynoted on “Reimagining Security for the Agentic Workforce.”

What it means

Agents are already deployed in production. The security frameworks are trying to catch up, but they're all missing the same critical pieces. If you're running autonomous agents today, assume your security layer has holes. The first real-world “Living Off the AI” exploit report is probably months, not years, away.

Links and reactions

Coverage

VentureBeat — “Five frameworks, three critical gaps”

RSAC 2026 — Conference recap

11 GitHub Will Train AI on Your Code (Unless You Opt Out)

Starting April 24, GitHub will use Copilot interaction data to train AI models. This applies to Free, Pro, and Pro+ users.

The official policy covers prompts you send to Copilot, the outputs Copilot returns (and whether you accept or modify them), and the code context surrounding your cursor that Copilot reads while generating suggestions. Content “at rest” in private repositories is excluded — but GitHub uses that phrase deliberately, because Copilot does process code from private repos whenever you're actively using it. Every file you touch with Copilot enabled becomes potential training data through the cursor-context window.

It's opt-out, not opt-in. You have to actively turn it off. Business and Enterprise users are not affected.

What it means

This isn't bulk repo scraping — GitHub isn't training on your codebase wholesale. But anything you write while Copilot is active counts: the snippet under your cursor, the prompt you typed, the suggestion you accepted or rejected. For most developers using Copilot daily, that captures the working surface of their real code. If opt-out rates stay low, GitHub gets a uniquely valuable dataset of how production code actually gets written. If developers push back, it could accelerate migration to alternatives. The deadline is April 24. Mark it.

Links and reactions

Coverage

GitHub Blog — Official policy update

The Decoder — “GitHub will use Copilot data to train models”

12 Alibaba Ships a Million-Token Coding Model

Qwen 3.6-Plus: a million-token context window, always-on chain-of-thought reasoning (no toggle — thinking is always active), and 78.8% on SWE-bench Verified.

Pricing varies by provider: about $0.40 per million input tokens on Alibaba's international API, $0.325 on OpenRouter, and even lower on China mainland pricing. For comparison, Claude Opus 4.6 costs $5.00/M input tokens — roughly 12x more. GPT-5.2 costs about $1.75/M — roughly 4x more.
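The ratios are easy to verify. The sketch below uses only the input-token prices quoted above. The dictionary keys and function names are made up for illustration, and output-token pricing is not quoted here, so it is left out.

```python
# USD per million input tokens, as quoted above.
PRICE_PER_M_INPUT = {
    "qwen-3.6-plus-alibaba-intl": 0.40,
    "qwen-3.6-plus-openrouter": 0.325,
    "gpt-5.2": 1.75,
    "claude-opus-4.6": 5.00,
}


def input_cost(model: str, input_tokens: int) -> float:
    """Dollar cost of sending `input_tokens` tokens to `model` (input side only)."""
    return PRICE_PER_M_INPUT[model] * input_tokens / 1_000_000


def price_ratio(expensive: str, cheap: str) -> float:
    """How many times more the first model costs per input token than the second."""
    return PRICE_PER_M_INPUT[expensive] / PRICE_PER_M_INPUT[cheap]
```

An agent run that reads 50 million input tokens costs about $20 at $0.40/M and about $250 at $5.00/M, a ratio of roughly 12.5 to 1.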

This is the first model shipped since Alibaba formed its Token Hub (ATH) unit — a new division built on the thesis that agent workloads will drive token consumption at 10–100x chatbot levels.

What it means

China's coding models are getting very good, very fast, at a fraction of the cost. If you're building agent infrastructure, the price gap matters, because agents burn millions of tokens on routine tasks. At $0.40/M versus $5.00/M, the input side of the same agent workflow costs roughly one-twelfth as much. Cheap models make agents economically viable for use cases that expensive models can't serve.

Links and reactions

Coverage

Alibaba Cloud Blog — Official announcement

Caixin Global — “Enhanced coding capabilities”

OpenRouter — Model listing with benchmarks and pricing

Fireworks AI — Exclusive availability announcement

13 Quantum Computers Just Got Closer to Breaking Encryption

Two research groups — Caltech and Google — independently showed major reductions in the qubits needed to break RSA and elliptic-curve cryptography, the systems that protect most of the internet.

Caltech's design could crack RSA in about three months using 100,000 qubits. Today's quantum computers have hundreds to a few thousand physical qubits. The gap is closing faster than expected.

What it means

Post-quantum cryptography just became more urgent. If your product handles sensitive data, the timeline for upgrading encryption is shorter than you think. NIST has already published post-quantum standards; the question is how fast organizations adopt them.

Links and reactions

Coverage

Quanta Magazine — “New advances bring the era of quantum computers closer than ever”

14 OpenAI Buys a Media Company

OpenAI acquired TBPN, a tech talk show and media company. It's the company's first media acquisition. TBPN reports to Chris Lehane's strategy organization. OpenAI says editorial independence will be maintained.

OpenAI has already made six acquisitions in 2026 (Astral, Promptfoo, OpenClaw, TBPN, and others), a pace that by April nearly matches its total for all of 2025.

What it means

AI companies are buying distribution, not just technology. When your product is hard to differentiate on features, owning the conversation matters.

Links and reactions

Coverage

TechCrunch — “OpenAI acquires TBPN, the buzzy founder-led business talk show”

Reactions

Sam Altman — “TBPN is my favorite tech show. We want them to keep that going... I don't expect them to go any easier on us.” · 6.3K likes

Swyx (Latent Space) — “Wait... you guys are selling podcasts??!”