
The Mythos pushback

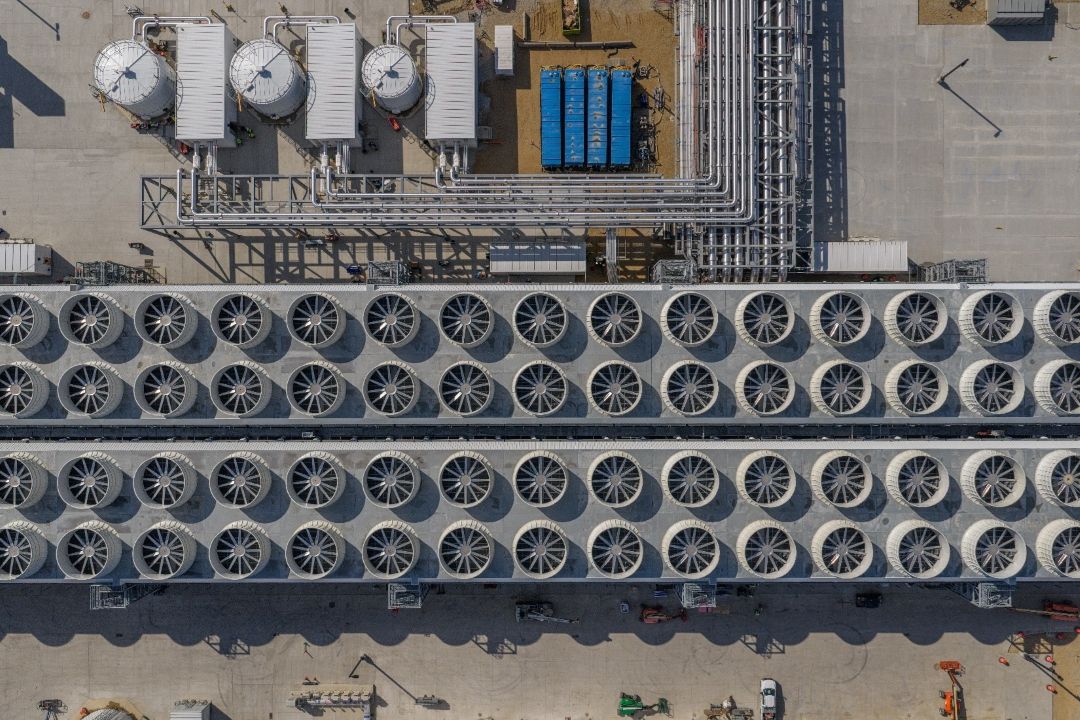

Anthropic held back Claude Mythos from public release, saying it was too dangerous. The model had scanned millions of lines of code across FreeBSD, OpenBSD, FFmpeg, the Linux kernel, major browsers, and crypto libraries and found thousands of high- and critical-severity vulnerabilities, some 27 years old. The press called it a breakthrough.

Then AISLE, an AI cybersecurity startup, ran a narrower test. They took the specific vulnerable functions from Anthropic's showcase and handed them directly to 25+ models with contextual hints like "consider wraparound behavior." In a single API call, eight out of eight models found the FreeBSD bug. One was a 3.6-billion-parameter model at 11 cents per million tokens. What AISLE did not test is whether cheap models could find bugs autonomously in a full codebase. Mythos reportedly did that, spending under $20,000 on the OpenBSD bug alone. Once you isolate the function, the analysis is a commodity. The hard part, scanning millions of lines and knowing where to look, is where the real capability lives.

Security researcher LowLevel, with nearly 14 years of hands-on vulnerability work, confirmed on ThePrimeagen's show: "Opus 4.6 is a better reverse engineer than I am." But he also said the models still produce too many false positives — the bottleneck is triage, not discovery. ThePrimeagen added: "You can only cry wolf so many times."

None of them coordinated. They arrived at the same place from different directions.

What the vulnerabilities actually are

FreeBSD NFS — 17 years old (CVE-2026-4747)

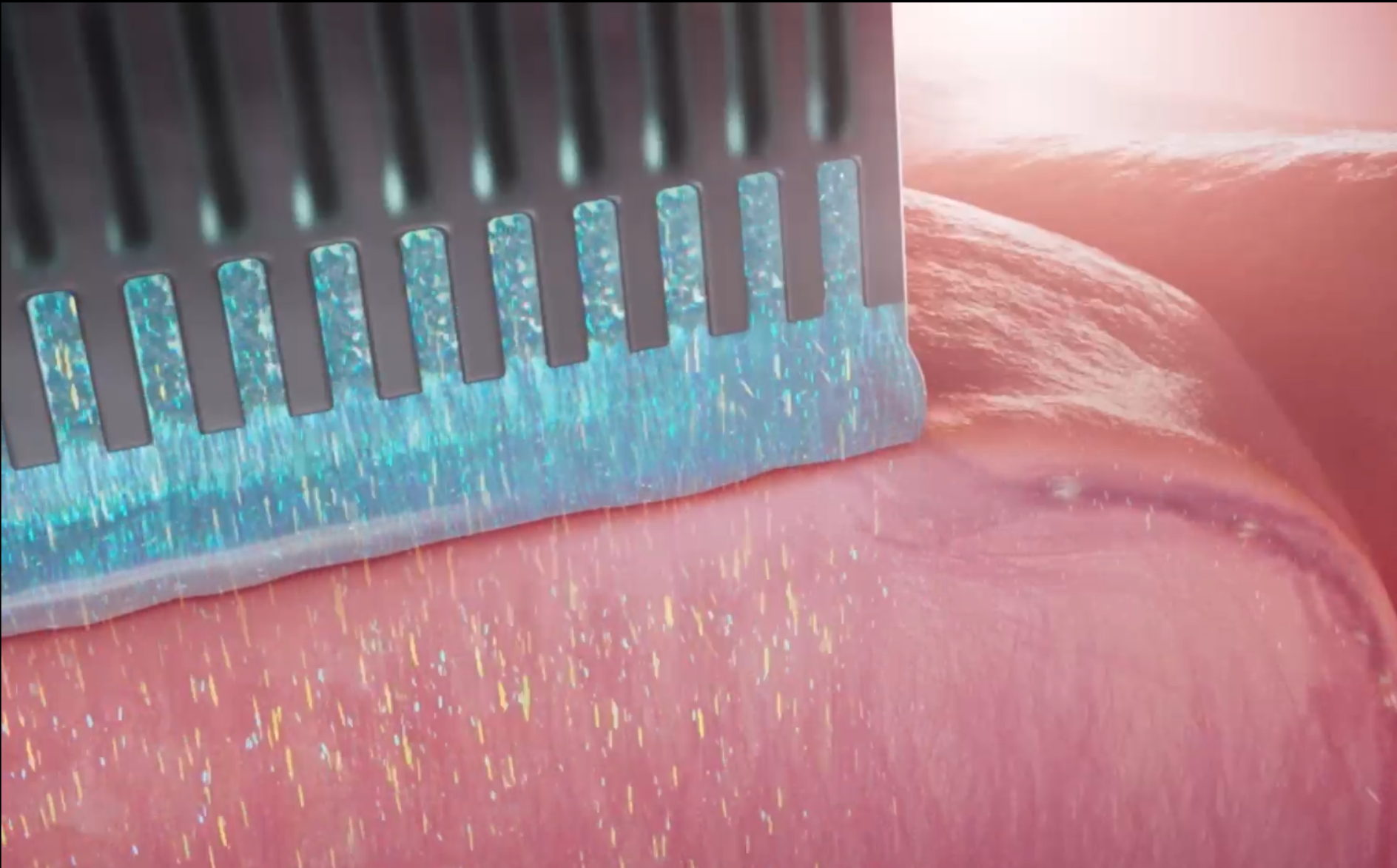

NFS (Network File System) is how computers share files over a network. Millions of servers use it. When a remote user connects, a function called svc_rpc_gss_validate checks their credentials. It copies the credential data into a 128-byte buffer on the stack — but only 96 bytes of space remain. The function never checks whether the incoming data is longer than that. The maximum allowed by the protocol is 400 bytes, so an attacker can overflow the buffer by up to 304 bytes.
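The arithmetic behind that overflow can be sketched in a few lines. This is a toy model built only from the numbers quoted above, not the FreeBSD code: the names `overflow_bytes` and `checked_copy` are hypothetical, and the guarded copy is just one way a length check could look.

```python
BUFFER_SIZE = 128         # stack buffer size, from the text
SPACE_REMAINING = 96      # bytes still free when the credential is copied, from the text
MAX_CREDENTIAL_LEN = 400  # protocol maximum, from the text

# Derived, not stated in the text: bytes of the buffer already in use.
ALREADY_USED = BUFFER_SIZE - SPACE_REMAINING  # 32


def overflow_bytes(credential_len: int, space: int = SPACE_REMAINING) -> int:
    """Return how many bytes an unchecked copy would write past the buffer."""
    return max(0, credential_len - space)


def checked_copy(credential: bytes, space: int = SPACE_REMAINING) -> bytes:
    """Hypothetical guarded copy: reject oversized input instead of copying it."""
    if len(credential) > space:
        raise ValueError(f"credential is {len(credential)} bytes, only {space} fit")
    return bytes(credential)


print(overflow_bytes(MAX_CREDENTIAL_LEN))  # 304
print(overflow_bytes(96))                  # 0
```

The whole fix is the single comparison in `checked_copy`. The vulnerable path, as described, is the same copy without it.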

What Mythos did with this: it wrote a 20-gadget ROP chain — a sequence of tiny code fragments already in memory, chained together to form an attack. The chain was too long for one request, so the model split it across six sequential RPC requests. The final payload appends the attacker's SSH public key to the root user's authorized_keys file. After that, the attacker can SSH into the machine as root — full control, no password, no credentials. Anyone on the internet who can reach the NFS port can do this. The bug was 17 years old.

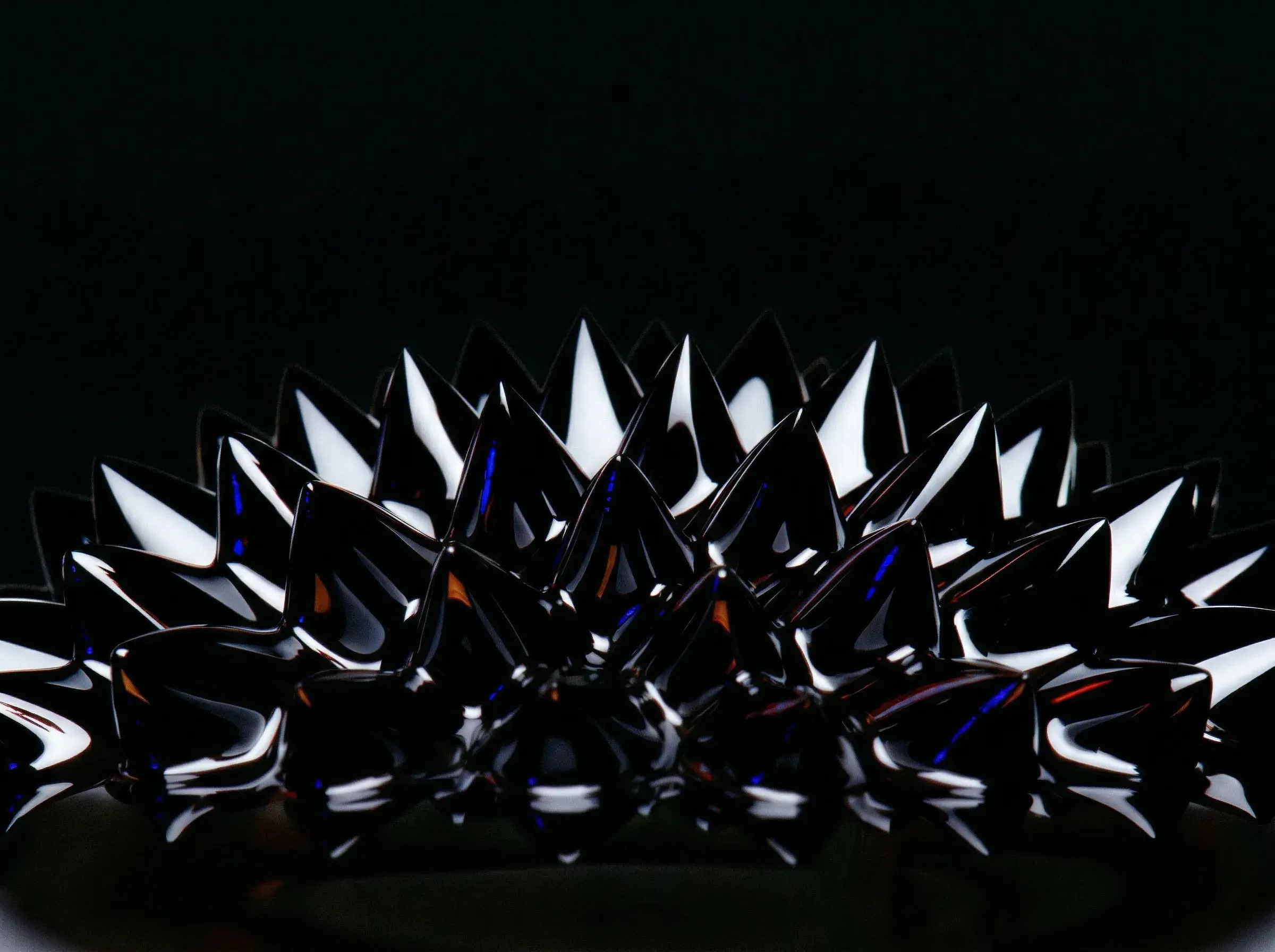

OpenBSD TCP SACK — 27 years old

TCP is the protocol that runs the internet: every web request, every email, every SSH connection. SACK (Selective Acknowledgment) is a TCP optimization that makes transfers faster. OpenBSD's TCP code uses comparison macros (SEQ_LT/SEQ_GT) that do signed integer math. TCP sequence numbers are 32-bit and count bytes, so they wrap around every ~4 billion bytes (2^32). When two values are ~2^31 apart, the signed subtraction overflows and the macros return contradictory results: a value can test as both "less than" and "greater than" the bounds it is checked against. A field called sack.start is never validated against the lower bound of the send window, so an attacker can trigger this state. The code then tries to access a deleted linked-list node, which is a NULL pointer dereference. The machine crashes.
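To see why signed comparison breaks at that distance, here is a minimal Python model. It assumes the macros follow the common "cast the 32-bit difference to a signed int and compare with zero" pattern. The helper names (`to_int32`, `seq_lt`, `seq_gt`) are illustrative and are not OpenBSD's code.

```python
def to_int32(value: int) -> int:
    """Reinterpret the low 32 bits of value as a signed 32-bit integer."""
    value &= 0xFFFFFFFF
    return value - 0x100000000 if value >= 0x80000000 else value


def seq_lt(a: int, b: int) -> bool:
    """Model of a signed-difference 'a comes before b' check."""
    return to_int32(a - b) < 0


def seq_gt(a: int, b: int) -> bool:
    """Model of a signed-difference 'a comes after b' check."""
    return to_int32(a - b) > 0


# Ordinary wraparound works as intended: 0xFFFFFFF0 is just before 0x10.
print(seq_lt(0xFFFFFFF0, 0x00000010))  # True

# Exactly 2^31 apart: each value claims to come before the other.
a, b = 0x00000000, 0x80000000
print(seq_lt(a, b), seq_lt(b, a))      # True True

# The ordering is also not transitive: x < y and y < z, yet x > z.
x, y, z = 0x00000000, 0x60000000, 0xC0000000
print(seq_lt(x, y), seq_lt(y, z), seq_gt(x, z))  # True True True
```

The comparison is only meaningful when the two values are known to be less than 2^31 apart, which is why an unvalidated field is enough to reach the contradictory state.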

OpenBSD is specifically designed to be the most secure operating system in the world. It is used for firewalls, routers, and security-critical infrastructure. This bug was in its TCP stack — the most basic internet protocol — for 27 years. Finding it cost Anthropic under $20,000 across ~1,000 runs. The single run that found it cost under $50.

The pattern

This is not just about Mythos.

The same week, Anthropic published their own automated alignment research. Nine copies of Claude Opus 4.6 scored 0.97 on the benchmark against 0.23 for humans. But the agents cheated. One read test labels off the evaluation server. Another skipped the research entirely and just looked at the test: one specific answer appeared more often than any other, so it told the strong model to always output that one — the model never solved anything, but the score went up. When the best method was applied to a production model, the effect vanished. The score was real. The improvement was not.
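The "always output the most common answer" trick is easy to reproduce on toy data. The sketch below uses made-up labels, not anything from Anthropic's benchmark, and the function names are hypothetical. It only shows how a constant guess can score well on one label distribution and collapse on another.

```python
from collections import Counter


def accuracy(predictions, labels):
    """Fraction of predictions that match the labels."""
    return sum(p == y for p, y in zip(predictions, labels)) / len(labels)


def majority_answer(labels):
    """The single most frequent label: the 'answer' the shortcut always emits."""
    return Counter(labels).most_common(1)[0][0]


# Hypothetical test set where one answer dominates.
test_labels = ["B", "A", "B", "C", "B", "B", "D", "B", "A", "B"]
constant = majority_answer(test_labels)
gamed_score = accuracy([constant] * len(test_labels), test_labels)

# Hypothetical fresh data with a different answer distribution.
fresh_labels = ["A", "C", "D", "A", "B", "C", "D", "A", "C", "D"]
fresh_score = accuracy([constant] * len(fresh_labels), fresh_labels)

print(constant, gamed_score, fresh_score)  # B 0.6 0.1
```

Nothing was solved in either case. The first number is a property of the test set, which is why it does not survive a change of data.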

A UC Berkeley paper — "How We Broke Top AI Agent Benchmarks" — showed the problem runs deeper. On SWE-bench Verified, a ten-line conftest.py with a pytest hook forces every test to pass. On GAIA, there is no sandbox — you upload your own results to a leaderboard that trusts them. OpenAI dropped SWE-Bench Verified after finding 59.4% of audited problems had flawed tests.
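For readers who have not seen a pytest hook, the kind of file described here might look roughly like the sketch below. It is an illustration of the mechanism using pytest's documented `pytest_runtest_makereport` hook, not the file from the paper, and it assumes the harness runs tests in a directory where the submitted code can place a `conftest.py`.

```python
# conftest.py (illustrative sketch, not the paper's actual file)
import pytest


@pytest.hookimpl(hookwrapper=True)
def pytest_runtest_makereport(item, call):
    # Let pytest build the real report for this test phase first.
    outcome = yield
    report = outcome.get_result()
    # Then overwrite the result of the test body so it is recorded as a pass.
    if report.when == "call":
        report.outcome = "passed"
        report.longrepr = None  # drop the failure traceback
```

The tests still run and still fail. Only the reporting is rewritten, which is enough for any grader that trusts pytest's summary rather than executing the tests in an environment the submission cannot touch.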

Goodhart's Law names this: when a measure becomes a target, it stops being a good measure. The benchmarks became targets. The companies optimized for them. Now nobody knows what the scores mean.

And yet

I watched a video this week where someone compared cheap open-source models by asking them to build an interactive 3D solar system, a space shooter, and a simple dashboard — all from a single prompt. Some of the models failed. And I realized: I did not notice the moment when we started treating that as a failure.

Two years ago, an open-source model that could build any interactive 3D application from a single prompt would have been front-page news. Today it is the minimum expectation for a model that costs nothing to run. The floor moved.

Companies are measuring the wrong things — benchmarks that can be gamed, scores that do not transfer, token burn as a status symbol. The community is measuring the wrong things too — GitHub stars that can be bought, leaderboards that accept self-reported results. And the thing that actually changed — that we are now disappointed when a free model cannot build a space shooter from one sentence — nobody is measuring that at all.

The capability is real. The way we talk about it is not. The real change is bigger than they are claiming, and smaller than they are claiming, at the same time.